I am a human-computer interaction researcher. My research spans social computing and human-AI interaction. The running thread across my work is a commitment to pluralism: the recognition that people's values, goals, and contexts are irreducibly diverse, and that computing systems, from social media feeds to generative AI, too often flatten that diversity in ways that backfire. For systems designed to help a user accomplish their goals, this flattening simply produces worse outcomes: a system that misreads who you are cannot effectively serve what you need. But even for systems with explicitly designed pro-social goals (say, helping people learn), the path to those goals looks different depending on the user and their context.

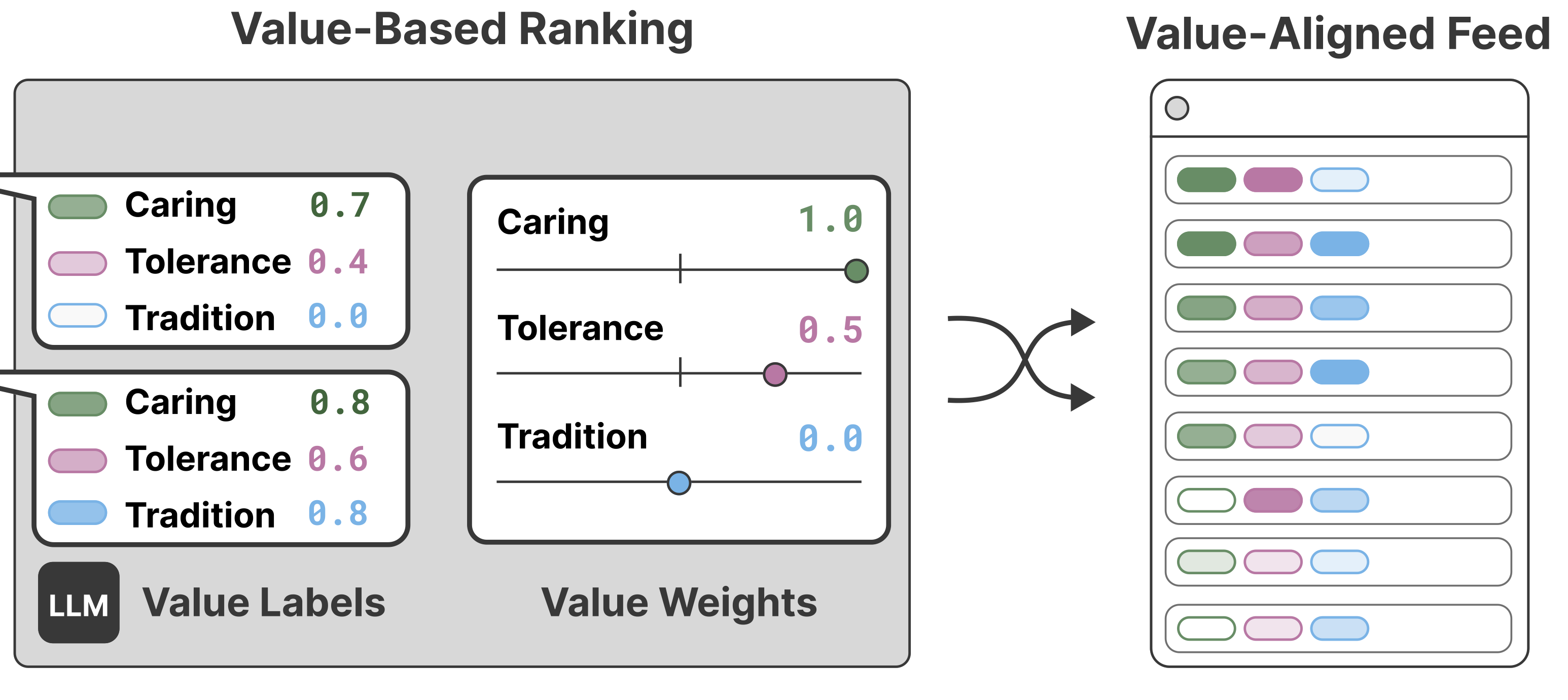

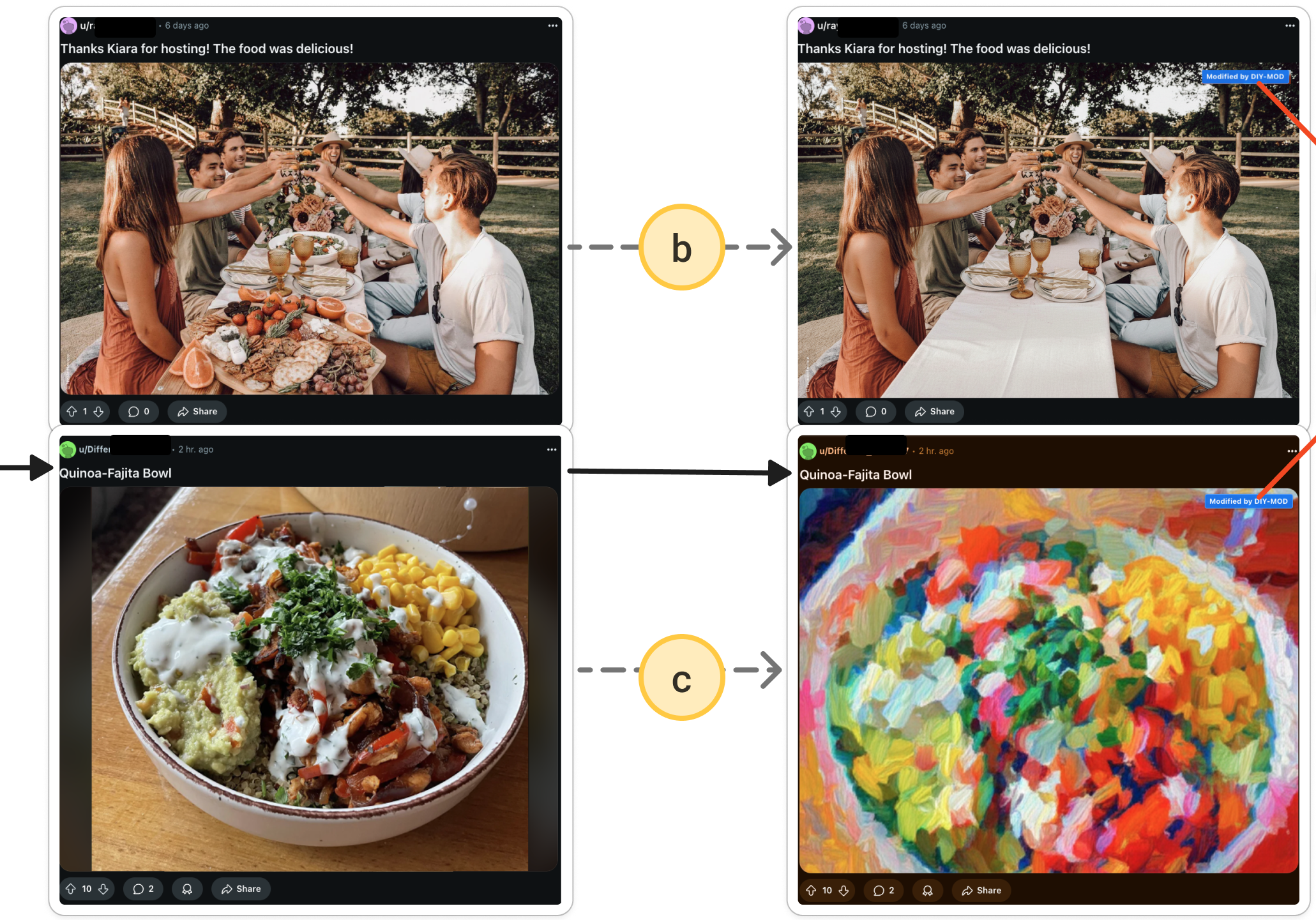

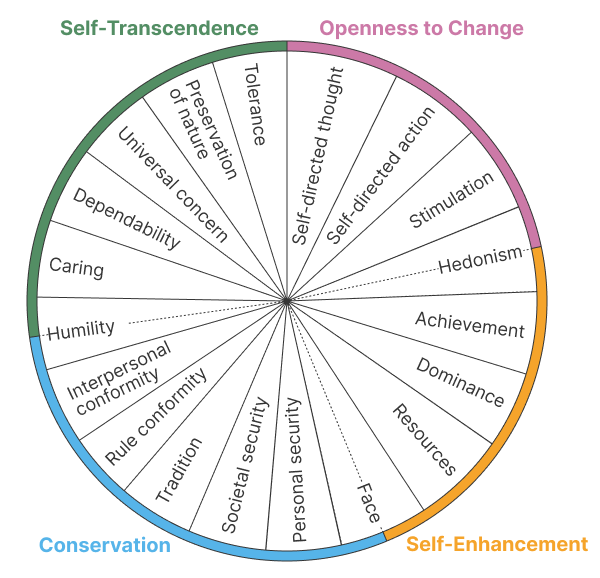

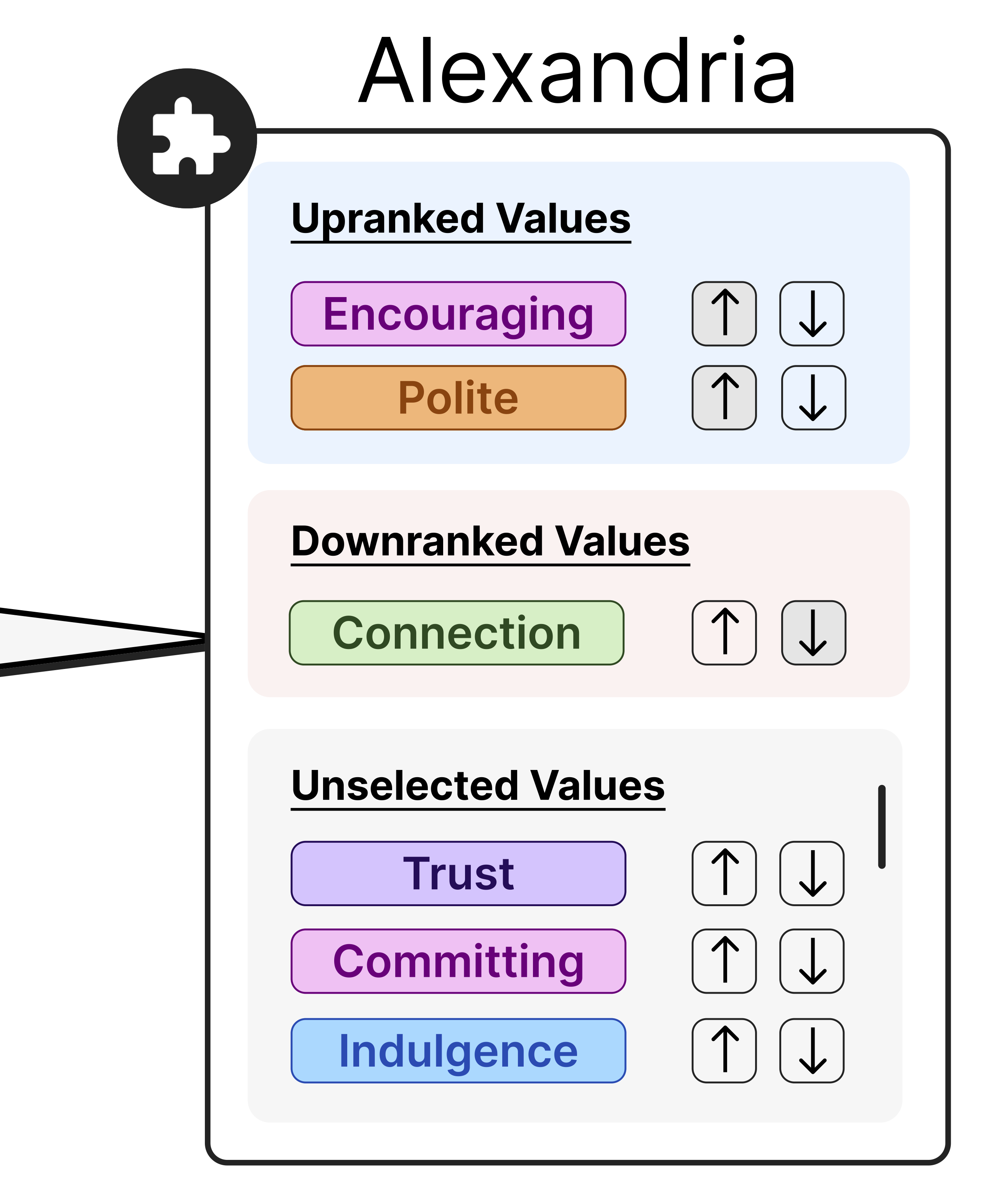

Here's what this looks like across a few domains. In social media contexts, I've examined how content moderation, algorithmic feed curation, and misinformation interventions are handled in ways that are disconnected from the actual diversity of users, and I have built systems that let users shape how content is filtered, curated, and surfaced for them. In generative AI, I work on aligning AI behavior to individual users rather than aggregate norms. In both cases, designing for pluralism can be thought of as a form of personalization, but one where the objective is accountable to user needs rather than platform interests or centralized defaults. Sometimes designing for pluralism means building systems that surface users' own complexity back to them in legible form so that they can act on it, for instance by reflecting to users how their values and communication shift across different relationships so they can better manage their self-expression.

News

- Apr 2026 Congratulations to Gabriel Koo on being selected as a finalist at the CSE Annual Undergraduate Research Symposium for his work Value Faces: Surfacing How Self-Presentation Shifts Across Relationships!

- Mar 2026 Congratulations to both Rayhan Rashed and Larnell Moore on receiving the MIDAS Empowering Research with AI Award — Rayhan for Beyond Binary Moderation: Transforming Online Safety through Generative AI and Larnell for DotRAG: Retrieval-Time Relational Path Reasoning in Knowledge Graphs!

- Mar 2026 Our paper What If Moderation Didn’t Mean Suppression? A Case for Personalized Content Transformation, led by Rayhan Rashed, received a Best Paper Honorable Mention at CHI 2026!

- Mar 2026 Co-organized a campus-wide workshop on AI and sustainability with Rada Mihalcea and Mohammed Ombadi, bringing together researchers across computer science, climate science, urban planning, public policy, and more to exchange ideas on how AI can support sustainability goals!

- Feb 2026 Congratulations to Rayhan Rashed on receiving the UM Social Impact Engineering Award for his work on personalized content transformation as a form of moderation!

- Oct 2025 Congratulations to Rayhan Rashed on receiving the Best Poster Award at the UMich Human-Centered AI Symposium for his work on personalized content transformation as a form of moderation!

- Oct 2025 Congratulations to Rayhan Rashed on receiving the Best Application of AI Award at the UMich AI Symposium for his work on personalized content transformation as a form of moderation!

Publications

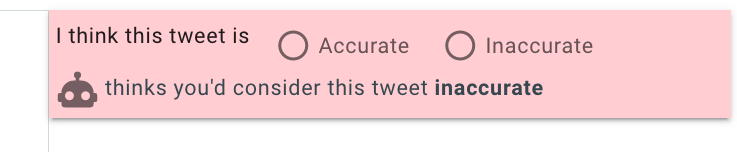

What If Moderation Didn't Mean Suppression? A Case for Personalized Content Transformation

CHI 2026 Best Paper Honorable Mention

Paper · Code · Project Site

farnaz@umich.edu

Assistant Professor

Computer Science and Engineering

School of Information (By courtesy)

University of Michigan

CV · Google Scholar

Teaching

PhD Students

- If you are a prospective PhD student, please indicate your interest in working with me in both your UMich CSE PhD application and your research statement.