Homework Assignment #2 — Test Automation

In this assignment you will use tools to automatically create high-coverage test suites for different programs.

You may work with a partner for this assignment. If you do, you must use the same partner for all sub-components of this assignment.

Start Early

Professors often exhort students to start assignments early. Many students wait until the night before and then complete the assignments anyway. Students thus learn to ignore "start early" suggestions. This is not that sort of suggestion.

The tools used here can take many hours to produce results. However, once running, they are completely automated. Thus, you can start them running overnight, sleep and ignore them, and wake up to results.

This means that even though the assignment may not take hours of your active personal attention, you must start it days before the due date to be able to complete it in time.

Installing, Compiling and Running Legacy Code

It is your responsibility to download, compile, and run the subject programs and associated tools. Getting the code to work is part of the assignment. You can post on the forum for help and compare notes bemoaning various architectures (e.g., Windows vs. Mac vs. Linux). Ultimately, however, it is your responsibility to read the documentation for these programs and utilities and use some elbow grease to make them work.

Subject Programs and Tools

There are two subject programs for this assignment. The programs vary in language, desired test type, and associated tooling.

PNG Graphics (C) + American Fuzzy Lop

The first subject program is libpng's pngtest program, unchanged from Homework 1. This reuse has two advantages. First, since you are already familiar with the program, it should not take long to get started. Second, you will be able to compare the test cases produced by the black-box tool to the white-box test cases you made manually.

The associated test input generation tool is American Fuzzy Lop, version 2.52b. A mirror copy of the AFL tarball is here, but you should visit the project webpage for documentation. As the (punny? bunny?) name suggests, it is a fuzz testing tool.

You will use AFL to create a test suite of png images to exercise the pngtest program, starting from all of the png images provided with the original tarball.

AFL claims that one of its key advantages is ease of use. Just follow along with their quick start guide. Extract the AFL tarball and run "make". Then change back to the libpng directory and re-compile libpng with a configure line like:

$ CC=/path/to/afl-gcc ./configure --disable-shared CFLAGS="-static"

Note that you are not using "coverage" or gcov for this homework assignment. I recommend re-extracting the libpng tarball into a new, separate directory just to make sure that you are not mistakenly leaving around any gcov-instrumented files. We only want AFL instrumentation for this assignment.

After that I recommend making a new subdirectory and copying pngtest and all of the test images (including those in subdirectories) to it. You can either seed AFL with all of the default images or with all of your manually-created test images (from HW1); both are full-credit options. Move those images into a further "testcase_dir" subdirectory and then run something like:

$ /path/to/afl-fuzz -i testcase_dir -o findings_dir -- /path/to/pngtest @@

Note that findings_dir is a new folder you make up: afl-fuzz will put its results there.
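As a rough sketch of the copying step described above (certainly not the only way to do it), the shell function below gathers every .png under a source tree, including subdirectories, into one flat seed directory. The function name collect_seeds, its two arguments, and the numeric filename prefix are my own illustrative choices; the prefix exists only so that two images with the same name in different subdirectories do not overwrite each other.

```shell
# collect_seeds SRC_DIR DEST_DIR   (hypothetical helper, not part of AFL or libpng)
# Copies every *.png found under SRC_DIR, at any depth, into DEST_DIR.
collect_seeds() {
    src=$1
    dest=$2
    mkdir -p "$dest"
    # sort reads the whole file list before printing anything, so find has
    # finished walking the tree by the time the first copy is made.
    find "$src" -type f -name '*.png' | sort | {
        n=0
        while IFS= read -r f; do
            n=$((n + 1))
            # Prefix a counter so same-named files from different folders coexist.
            cp "$f" "$dest/$(printf '%04d' "$n")_$(basename "$f")"
        done
    }
}
```

For example, collect_seeds /path/to/libpng testcase_dir would fill testcase_dir with numbered copies. Keeping testcase_dir outside the source tree avoids picking up its own copies on a second run.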

Note that afl-fuzz does not stop on its own: it will run forever unless you stop it yourself. Read the Report instructions below for information on the stopping condition and knowing "when you are done".
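If you want to check progress without watching the status screen, one option is to read the fuzzer_stats file that afl-fuzz keeps in its output directory. The sketch below assumes that file contains a line of the form "paths_total : N", which is how AFL 2.52b writes it to my knowledge. The function names are illustrative, and the default target of 510 is simply the threshold named in the Written Report section.

```shell
# paths_total_of FINDINGS_DIR: print the paths_total value recorded in fuzzer_stats.
# Assumption: fuzzer_stats holds "key : value" lines, one of them keyed paths_total.
paths_total_of() {
    awk -F':' '$1 ~ /^paths_total[ \t]*$/ { gsub(/[ \t]/, "", $2); print $2 }' "$1/fuzzer_stats"
}

# reached_target FINDINGS_DIR [TARGET]: succeed once paths_total >= TARGET (default 510).
reached_target() {
    current=$(paths_total_of "$1")
    [ -n "$current" ] && [ "$current" -ge "${2:-510}" ]
}
```

A typical use from a second terminal would be: reached_target findings_dir && echo "enough data".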

Note also that you can resume afl-fuzz if it is interrupted or stopped in the middle (you don't "lose your work"). When you try to re-run it, it will give you a helpful message like:

To resume the old session, put '-' as the input directory in the command

line ('-i -') and try again.

Just follow its directions.

Note that afl-fuzz will likely abort the first few times you run it and ask you to change some system settings (e.g., echo core | sudo tee /proc/sys/kernel/core_pattern or echo core >/proc/sys/kernel/core_pattern). On Ubuntu systems it often asks twice. Prefixing sudo does not work for every command: sudo echo core >/proc/sys/kernel/core_pattern fails because the shell performs the > redirection with your own permissions before sudo ever runs. The simplest fix is to become root (e.g., sudo sh) and then execute the commands in a root shell.

The produced test cases are in the findings_dir/queue/ directory (that is, the queue/ subdirectory of whatever folder you passed to -o). They will not have the .png extension (instead, they will have names like 000154,sr...pos/36,+cov), but you can rename them if you like.
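If you would rather not rename files inside the directory AFL is still using, one hedged alternative is to copy the queue entries elsewhere and add the extension to the copies. The function name copy_queue_as_png and its arguments are hypothetical; nothing here is an AFL feature.

```shell
# copy_queue_as_png QUEUE_DIR OUT_DIR   (hypothetical helper)
# Copies each regular file in QUEUE_DIR to OUT_DIR, appending .png when missing.
copy_queue_as_png() {
    queue=$1
    out=$2
    mkdir -p "$out"
    for f in "$queue"/*; do
        [ -f "$f" ] || continue          # skip subdirectories and an unmatched glob
        base=$(basename "$f")
        case $base in
            *.png) ;;                    # already has the extension
            *) base=$base.png ;;
        esac
        cp "$f" "$out/$base"
    done
}
```

Working on copies also means a later resume of afl-fuzz sees its queue exactly as it left it.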

While AFL is running, read the technical whitepaper to learn about how it works and compare the techniques it uses to the basic theory discussed in class.

JSoup (Java) + EvoSuite

The second subject program is jsoup (v 1.11.2), a library for extracting real-world HTML data using DOM, CSS and jquery-like methods. A copy of the version of the source code known to work for this assignment is available here; you can also use git clone https://github.com/jhy/jsoup.git. It involves about 18,000 lines of code spread over 60 files. This program is a bit small for this course, but comes with a rich existing test suite. This existing test suite will serve as a baseline for comparison.

The associated test input (and oracle!) generation tool is EvoSuite, version 1.0.5. Mirror copies of evosuite-1.0.5.jar and evosuite-standalone-runtime-1.0.5.jar are available, but you should visit the project webpage for documentation.

EvoSuite generates unit tests (cf. JUnit) for Java programs.

You can install jsoup and use cobertura to assess the statement and branch coverage of its built-in test suite:

$ unzip jsoup-1.11.2.zip

$ cd jsoup-master/

$ mvn cobertura:cobertura

...

[INFO] Cobertura: Saved information on 253 classes.

Results :

Tests run: 648, Failures: 0, Errors: 0, Skipped: 11

...

[INFO] Cobertura Report generation was successful.

[INFO]

------------------------------------------------------------------------

[INFO] BUILD SUCCESS

$ firefox target/site/cobertura/index.html

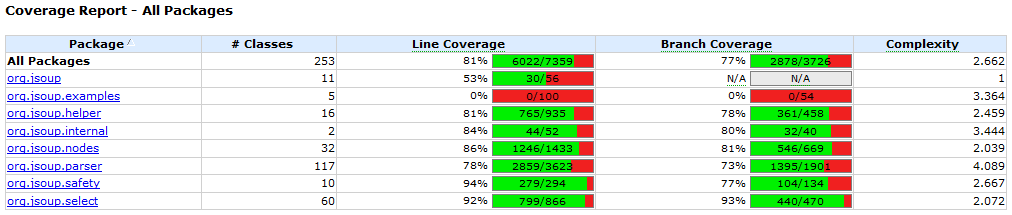

Note that the supplied test suite is of high quality, with 81% line coverage and 77% branch coverage overall.

EvoSuite includes some clear tutorials explaining its use. Mirror copies of hamcrest-core-1.3.jar and junit-4.12.jar are available if you need them.

Once you have EvoSuite installed you can invoke it on jsoup via:

$ cd jsoup-master

$ $EVOSUITE -criterion branch -target target/classes/

...

* Writing JUnit test case 'ListLinks_ESTest' to evosuite-tests

* Done!

* Computation finished

You can now find the EvoSuite tests in evosuite-tests/org/jsoup. You can run them all with maven; see the tutorial for more information.

Written Report

You must create a written PDF report reflecting on your experiences with automatic test generation. You must include your name and UM email id (as well as your partner's name and email id, if applicable). In particular:

- In a few sentences, your report should describe one test case that AFL created that covered part of libpng that your manual test suite from Homework 1 did not, and vice versa. (If no such tests exist, for example because you seeded AFL with all of your manual tests, instead describe what did happen. For example, if you seeded AFL with all of your manual tests, then AFL's output will be a superset of yours.) You should also indicate how many tests your run of AFL created, your total runtime, and the final coverage of the AFL-created test suite (use the technique described in Homework 1 to compute coverage; note that AFL will include all of the original tests as well, and yes, you should include those).

[2 points for AFL, 2 points for manual, 1 point for summary]

- Your report should include, as inlined images, one or two "interesting" valid PNG files that AFL created and a one-sentence explanation of why they are "interesting".

[1 point for image(s), 1 point for explanation]

- Your report should include a scatterplot in which the x axis is "seconds" and the y axis is paths_total as reported in the findings_dir/plot_data CSV file produced by your run of AFL. You can create this plot yourself (using the program of your choice, or even the now-familiar jfreechart!). Your scatterplot must include data reaching at least 510 paths_total on the y axis, which means you must run AFL until you have at least that much data. (See here for plot examples that include much more detail than you are asked to include here. Note that paths_total is not "own finds" but is instead "total paths" in the upper right corner of the AFL status screen.)

Include a sentence that draws a conclusion from this scatterplot.

Note that it does not matter how many rows are in your plot_data file or if you are missing some rows at the start or middle as long as you got up to 510 paths_total — it is common to have fewer than 510 rows.

Note that if you suspended afl-fuzz you may have a big time gap in your plot. We do not care how you handle that (e.g., ugly graph, big gap, fudge the times, whatever) — any approach is full credit.

[2 points for plot, 1 point for conclusion]

- Look at evosuite-report/statistics.csv and compare it to target/site/cobertura/index.html. In a few sentences, compare and contrast the branch coverage of the manually-created test suite to the EvoSuite-created test suite.

[2 points for a comparison that does more than just list numbers]

- Choose one class for which EvoSuite produced higher coverage than the human tests (if no such class exists, choose EvoSuite's "best" class). Look at the corresponding tests. In one paragraph, indicate the class and explain the discrepancy. For example, in your own words, what is EvoSuite testing that the humans did not? Why is EvoSuite more likely to generate such a test? What do you think of the quality of the tests? The readability? Suppose a test failed. Would the test's failure help you find the bug?

[4 points for a convincing analysis that shows actual insight]

- Choose one class for which EvoSuite produced lower coverage than the human tests (if no such class exists, choose EvoSuite's "worst" class). Elaborate and reflect as above, but also offer a hypothesis for why EvoSuite was unable to produce such a test: bring in your knowledge of how EvoSuite works.

[4 points for an analysis that shows insight, especially into EvoSuite's limitations]

- In one paragraph, your report should compare and contrast your observations (e.g., usability, efficacy, test quality) of AFL and EvoSuite. List at least one strength of each tool and at least one area for improvement. Which software engineering projects might benefit from the use of such tools? Would you use them personally? Why or why not?

[1 point for AFL strengths and weaknesses, 1 point for EvoSuite strengths and weaknesses, 3 points for insightful analysis]
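For the scatterplot item above, the raw plot_data file needs a little massaging before most plotting programs will accept it. The sketch below assumes the AFL 2.52b layout, in which the file starts with a "#" header line, the first column is a Unix timestamp, and the fourth column is paths_total; check the header of your own file before trusting those positions. The function name plot_points is illustrative.

```shell
# plot_points PLOT_DATA_FILE   (hypothetical helper)
# Prints "seconds,paths_total" rows, with seconds counted from the first data row.
# Assumption: column 1 is unix_time and column 4 is paths_total.
plot_points() {
    awk -F',' '
        /^#/ { next }                       # skip the header comment line
        NF >= 4 {
            if (start == "") start = $1     # first timestamp seen becomes t = 0
            printf "%d,%d\n", $1 - start, $4
        }
    ' "$1"
}
```

Running plot_points findings_dir/plot_data > points.csv gives a two-column file you can load into the plotting program of your choice. If you suspended afl-fuzz, the gap will simply show up as a jump in the seconds column.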

This does not have to be a formal report; you need only answer the questions in the rubric. However, nothing bad happens if you include extra formality (e.g., sections, topic sentences, etc.).

There is no explicit format (e.g., for headings or citations) required. For example, you may either use an essay structure or a point-by-point list of question answers.

The grading staff will select a small number of excerpts from particularly high-quality or instructive reports and share them with the class. If your report is selected you will receive extra credit.

Submission

For this assignment the written report is the primary artifact. There are no programmatic artifacts to submit (however, you will need to run the tools to create the required information for the report). (If you are working with a partner, try to use the "Group" feature on Canvas and submit one copy of the report. However, nothing bad happens if you fail to do this and/or instead submit two copies.)

Commentary

This assignment is perhaps a bit different than the usual EECS homework: instead of you, yourself, doing the "main activity" (i.e., creating test suites), you are asked to invoke tools that carry out that activity for you. This sort of automation (from testing to refactoring to documentation, etc.) is indicative of modern industrial software engineering.

Asking you to submit the generated tests is, in some sense, uninteresting (e.g., we could rerun the tool on the grading server if we just wanted tool-generated tests). Instead, you are asked to write a report that includes information and components that you will only have if you used the tools to generate the tests. Writing reports (e.g., for consumption by your manager or team) is also a common activity in modern industrial software engineering.

FAQ and Troubleshooting

In this section we detail previous student issues and resolutions:

Question: Using AFL, I get:

[-] SYSTEM ERROR : Unable to create './findings_dir/queue/id:000000,orig:pngbar.png'

Answer: This is apparently a WSL issue, but students running Linux who ran into it were able to fix things by making a new, fresh VM.

Question: Using AFL, I get:

[-] PROGRAM ABORT : Program 'pngtest' not found or not executable

or

[-] PROGRAM ABORT : Program 'pngnow.png' is not an ELF binary

Answer: You need to use the right /path/to/pngtest instead of just pngtest. You must point to the pngtest executable (produced by "make") and not, for example, pngtest.png.

Question: When I try to run AFL, I get:

[-] PROGRAM ABORT : No instrumentation detected

Answer: You are pointing AFL to the wrong pngtest executable. Double-check the configure step ($ CC=/path/to/afl-gcc ./configure --disable-shared CFLAGS="-static"), rebuild pngtest using "make", and then point to exactly that executable and not a different one.

Question: When I am running AFL, it gets "stuck" at 163 paths.

Answer: In one instance, the student had forgotten the @@ at the end of the AFL command. Double check the command you are running!

Question: When I try to compile libpng with AFL, I get:

configure: error: C compiler cannot create executables

Answer: You need to provide the full path to the afl-gcc executable, not just the path to hw2/afl-2.52b/.

Question: Some of the so-called "png" files that AFL produces cannot be opened by my image viewer and may not even be valid "png" files at all!

Answer: Yes, you are correct. (Thought question: why are invalid inputs sometimes good test cases for branch coverage?)
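When hunting for images that a viewer can actually open, a quick first filter is the 8-byte PNG signature (hex 89 50 4e 47 0d 0a 1a 0a) that every PNG file starts with. The sketch below checks only that signature; a matching signature does not guarantee that the rest of the file is well-formed, so treat it as a way to narrow the candidates and nothing more. The function name has_png_signature is hypothetical.

```shell
# has_png_signature FILE   (hypothetical helper)
# Succeeds if the first 8 bytes of FILE are the PNG signature.
has_png_signature() {
    # od prints the first 8 bytes as hex; tr strips the whitespace between them.
    sig=$(od -An -tx1 -N8 "$1" | tr -d ' \t\n')
    [ "$sig" = "89504e470d0a1a0a" ]
}
```

For example: for f in findings_dir/queue/*; do has_png_signature "$f" && echo "$f"; done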

Question: Cobertura suggests that the project has 3,726 branches, but EvoSuite seems to think they sum up to 5,149. What gives?

Answer: Good observation! Everything is fine. Double check the lecture slides. What are some ways in which two tools could disagree about the number of "branches" in the same Java classes?
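If you want to reproduce EvoSuite's side of such a sum yourself, one hedged approach is to add up the per-class goal counts in evosuite-report/statistics.csv. The sketch assumes the file has a header row containing columns named Total_Goals and Covered_Goals (the default output as far as I know, but check your own file); it looks the columns up by name instead of by position. The function name evosuite_totals is illustrative.

```shell
# evosuite_totals STATISTICS_CSV   (hypothetical helper)
# Prints "covered total", summed over every row of the file.
# Assumption: the header row names the columns Total_Goals and Covered_Goals.
evosuite_totals() {
    awk -F',' '
        NR == 1 {
            for (i = 1; i <= NF; i++) col[$i] = i      # header name -> column index
            if (!("Total_Goals" in col) || !("Covered_Goals" in col)) { bad = 1; exit 1 }
            next
        }
        {
            total   += $(col["Total_Goals"])
            covered += $(col["Covered_Goals"])
        }
        END { if (!bad) printf "%d %d\n", covered, total }
    ' "$1"
}
```

This only tells you what EvoSuite counted; explaining why Cobertura counts differently is still up to you.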

Question: When trying to run EvoSuite, I get:

-criterion: command not found

Answer: This almost always indicates some sort of typo in your export EVOSUITE=... setup line.
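To see why the message looks the way it does: if EVOSUITE is empty or unset, the shell expands $EVOSUITE to nothing and then treats -criterion as the name of the command to run. A minimal sketch of a setup line plus a sanity check follows. It assumes evosuite-1.0.5.jar sits in your current directory (adjust the path if not), and the function name check_evosuite_var is hypothetical.

```shell
# Assumption: evosuite-1.0.5.jar is in the current directory.
EVOSUITE="java -jar $(pwd)/evosuite-1.0.5.jar"
export EVOSUITE

# check_evosuite_var: fail loudly if the variable is empty or unset, which is
# the situation that produces "-criterion: command not found".
check_evosuite_var() {
    if [ -z "${EVOSUITE:-}" ]; then
        echo "EVOSUITE is not set" >&2
        return 1
    fi
    echo "EVOSUITE is: $EVOSUITE"
}
```

Note that an export typed in one terminal does not carry over to a new terminal window, so rerun the setup line (or the check) whenever you open a fresh shell.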

Question: Can I terminate EvoSuite and resume it later?

Answer: Unfortunately, no. I emailed the author, who indicated that this is not currently possible.

Question: What does "interesting" mean for the report? Similarly, how should we "elaborate" or "reflect"?

Answer: We sympathize with students who are concerned that their grades may not reflect their mastery of the material. Being conscientious is a good trait for CS in general and SE in particular. However, this is not a calculus class. Software engineering involves judgment calls. I am not asking you to compute the derivatives of various polynomials (for which there is one known right answer). You are carrying out activities that are indicative of SE practices.

Suppose you are tasked with evaluating a test generation tool for your company. You are asked to do a pilot study evaluating such a tool and prepare a report for your boss. One of the things the boss wants to know is: "What are the risks associated with using such a tool?" Similarly for the benefits or rewards.