Research

My research interests include computer architecture and its interaction with software systems and device/VLSI technologies. A few current and past research projects are listed below.

In-Memory Computing:

Computer designers have traditionally separated the roles of storage and compute units. Memories stored data; processors’ logic units computed on it. Is this separation necessary? A human brain does not separate the two so distinctly. Why should a processor? My work raises this fundamental question about the role of memory in computing systems and proposes giving memory a dual responsibility: to both store data and compute on it.

Specifically, my recent work has produced innovative hardware designs for in-memory computing, which could have a significant impact on big-data computing system design and accelerate machine learning (ML). My approach is a significant departure from traditional processing-in-memory (PIM) solutions, which merely seek to move computation closer to storage units while leaving the structure and functionality of the memory itself unchanged. Instead, my work seeks to turn storage units into computational units, so that computation can happen without moving data back and forth between storage and computational units. This approach not only dramatically reduces data movement overhead but also unlocks massive vector compute capability, because data stored across hundreds to thousands of memory arrays can be operated on concurrently.

The end result is that memory arrays morph into massive vector compute units that are potentially one to two orders of magnitude wider than a modern graphics processor’s (GPU’s) vector units at near-zero area cost.
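As a rough illustration of this idea, the sketch below is a toy software model, not the actual hardware design. It treats each memory array as a few rows of bits and applies the same bitwise operation to the same pair of rows in every array. The array count, the row width, the choice of bitwise AND, and all names are assumptions made for the example. In hardware the per-array operations would happen concurrently rather than in a loop.

```python
from typing import List

NUM_ARRAYS = 256     # assumed number of memory arrays (hypothetical)
BITS_PER_ROW = 256   # assumed row width in bits (hypothetical)
ROW_MASK = (1 << BITS_PER_ROW) - 1


class MemoryArray:
    """Toy model of one memory array; each row is an int used as a bit vector."""

    def __init__(self, num_rows: int = 4) -> None:
        self.rows: List[int] = [0] * num_rows

    def write_row(self, index: int, value: int) -> None:
        # Keep only the bits that fit in one row.
        self.rows[index] = value & ROW_MASK

    def and_rows(self, src_a: int, src_b: int, dst: int) -> None:
        # The result is produced inside the array; no data leaves it.
        self.rows[dst] = self.rows[src_a] & self.rows[src_b]


def in_memory_and(arrays: List[MemoryArray], src_a: int, src_b: int, dst: int) -> None:
    # Software loop standing in for all arrays operating at the same time.
    for array in arrays:
        array.and_rows(src_a, src_b, dst)


def effective_vector_width(arrays: List[MemoryArray]) -> int:
    # Bits processed by one operation across all arrays.
    return len(arrays) * BITS_PER_ROW
```

Under these assumed sizes, a single operation touches `NUM_ARRAYS * BITS_PER_ROW` bits, which is where the "wide vector unit" view of memory comes from.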

My group has led many projects in this research area, including computational cache architectures, memristive computational memories, and generalized programming frameworks that can execute arbitrary data-parallel programs on in-memory architectures.

Precision Health:

Application- or domain-specific processor customization is another approach to sustaining growth in the post-Moore's-law world. I am targeting precision health, a domain that is growing at a rate far outpacing Moore’s law. Sequencing costs have plummeted from $10 million to $1,000 in just the last decade. They are expected to fall below $100 in two years, at which point sequencing may become as common as blood tests. Countries like the UK plan to sequence all of their citizens by 2025, producing massive amounts of data. To put this challenge in perspective, Facebook is estimated to store about 0.5 GB per user, whereas sequence data needs 300 GB per human genome, and one blood test for liquid biopsy produces ~1 TB. Custom hardware that can efficiently analyze these large volumes of data is crucial to the advancement of precision health.

Towards this end, my group has built several novel hardware accelerators for genome sequencing and COVID detection. We have verified chip prototypes and F1 FPGA cloud prototypes of our accelerators. Our novel read-alignment techniques are now part of Broad Institute / Intel's official BWA-MEM2 software. BWA-MEM is the de facto genomics read-alignment tool used by researchers and practitioners worldwide.

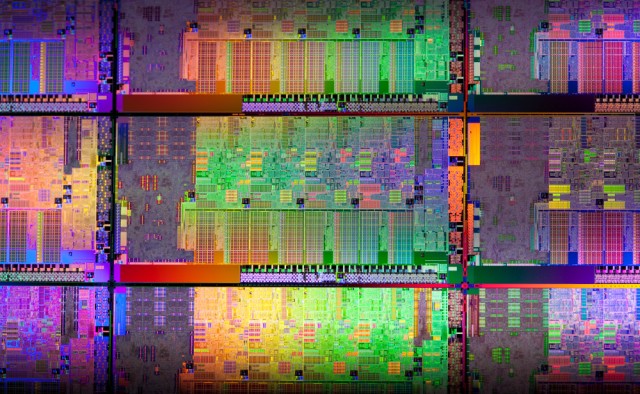

Heterogeneous Processor Microarchitecture:

We designed a novel energy-proportional core microarchitecture, the Composite Core, by pushing the notion of heterogeneity from its traditional definition of between cores to within a core. A Composite Core fuses a high-performance big out-of-order 𝝁Engine with an energy-efficient little in-order 𝝁Engine to achieve energy proportionality. An online controller maps high-performance phases to the big 𝝁Engine and low-performance phases to the little 𝝁Engine. By sharing much of the core's architectural state between the two 𝝁Engines, we reduced the switching overhead to near zero, which enabled fine-grained switching. We demonstrated significant advantages of our fine-grained heterogeneous architecture over competing power-saving techniques such as fine-grained DVFS mechanisms. We also improved our online controller using trace-based predictors. We further observed that the majority of the big 𝝁Engine's performance advantage comes from out-of-order scheduling of instructions, and that these schedules tend to repeat. Instead of wasting energy recreating identical schedules in the big 𝝁Engine, we proposed remembering the out-of-order schedules observed there and executing any future instances on the little 𝝁Engine. This enabled the little 𝝁Engine to achieve the big 𝝁Engine's performance for memoized schedules, but at a fraction of its energy. ARM Ltd holds several patents on these technologies.
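The controller's role described above can be sketched in a few lines. This is a minimal illustration, not the Composite Core controller itself: the per-phase metric (an estimated speedup of the big 𝝁Engine over the little one), the threshold value, and all names are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Phase:
    name: str
    # Hypothetical estimate: performance on big divided by performance on little.
    big_speedup: float


# Assumed cutoff: below this, the big engine's extra energy is not judged worthwhile.
SPEEDUP_THRESHOLD = 1.3


def choose_engine(phase: Phase, threshold: float = SPEEDUP_THRESHOLD) -> str:
    """Map a phase to the big engine only when it benefits enough from it."""
    return "big" if phase.big_speedup >= threshold else "little"


def schedule(phases: List[Phase]) -> List[str]:
    """Pick an engine independently for each phase, in program order."""
    return [choose_engine(phase) for phase in phases]
```

For example, `schedule([Phase("compute-bound", 2.0), Phase("memory-bound", 1.05)])` would place the first phase on the big engine and the second on the little one. Fine-grained switching only pays off if the cost of moving between engines is small, which is why the shared architectural state matters.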

Network-on-Chip:

A scalable communication fabric is needed to connect hundreds of components in a many-core processor. A Network-on-Chip (NoC), as opposed to ad-hoc point-to-point interconnects, is widely viewed as the de facto solution for integrating the components of a many-core architecture because of its highly predictable electrical properties and scalability. Conventionally, NoCs have been designed to optimize network-level metrics (such as throughput, latency, and bisection bandwidth) without considering the characteristics of the applications running at the end nodes. An earlier part of my research showed that such application-oblivious NoC designs can lead to sub-optimal performance, fairness, and power consumption. My dissertation addressed this inefficiency by adopting a system-level design approach that exploited various application characteristics to design high-performance and energy-efficient NoCs. Subsequently, I developed novel 3D NoC architectures, energy-proportional NoCs, and scalable NoC topologies.