Autograder Tutorial

What is Autograder?

- Autograder.io is an open-source automated grading system that lets programming instructors focus on writing high-quality test cases without worrying about the details of how to run them. Autograder.io is primarily developed and maintained at the University of Michigan’s Computer Science department, where it supports 4600 students per semester spread across a dozen courses. (from the Autograder GitHub page)

- It lets you test your implementation of each assignment in a consistent, independent environment.

- It is free to use.

Policies

- Each assignment allows you to run the grader 3 to 5 times per day, which prevents anyone from overfitting their implementation to the test cases.

- Once you reach the daily limit, you cannot submit for grading again that day, and unused submissions do not carry over to the next day.

- The daily quota refreshes automatically based on the US/EST (US Eastern) time zone.

- Some parts of the assignment will be graded by hand, so the autograder score is not the final score you will receive for the assignment.

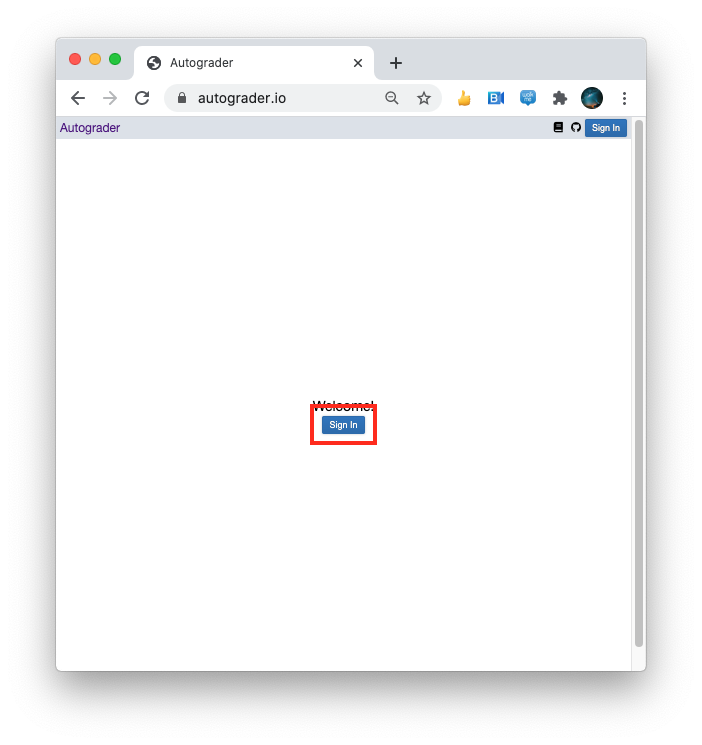

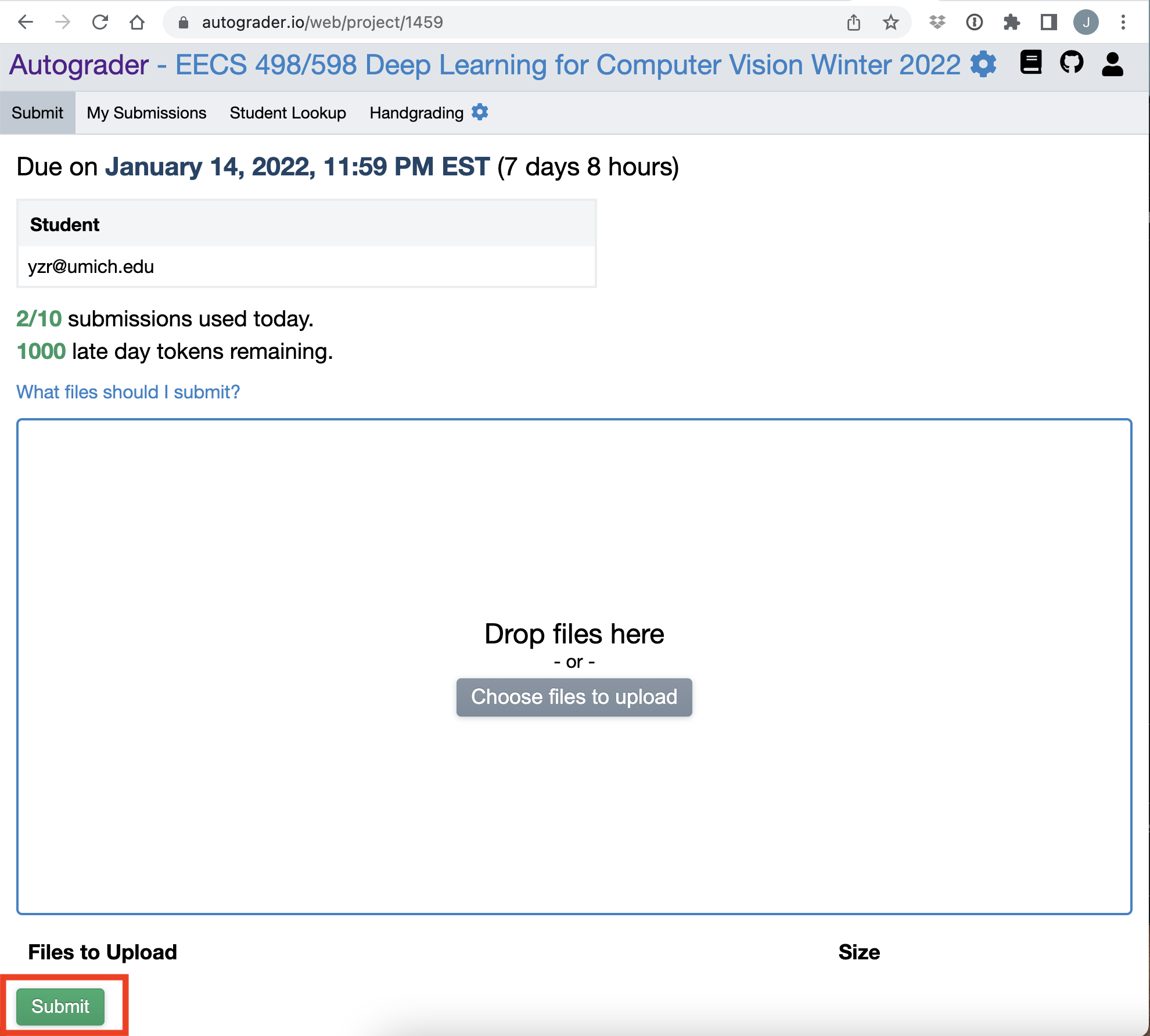
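To see what a quota tied to US Eastern time means if you work in another time zone, the following minimal Python sketch estimates how long remains until the Eastern calendar day ends. It rests on two assumptions that the policies do not state: that the quota refreshes at midnight Eastern, and that "US/EST" can be modelled with the standard `America/New_York` zone. The function name is hypothetical.

```python
from datetime import datetime, timedelta, timezone
from zoneinfo import ZoneInfo

# Assumption: "US/EST" is modelled with the America/New_York zone.
EASTERN = ZoneInfo("America/New_York")


def time_until_eastern_midnight(now: datetime) -> timedelta:
    """Return the time left until the next midnight in US Eastern time.

    Assumption: the daily quota refreshes at midnight Eastern; the
    policy only says the refresh follows the US/EST timezone.
    `now` must be timezone-aware.
    """
    now_eastern = now.astimezone(EASTERN)
    next_day = now_eastern.date() + timedelta(days=1)
    next_midnight = datetime(
        next_day.year, next_day.month, next_day.day, tzinfo=EASTERN
    )
    # Subtract in UTC so daylight-saving transitions are handled correctly.
    return next_midnight.astimezone(timezone.utc) - now.astimezone(timezone.utc)


if __name__ == "__main__":
    remaining = time_until_eastern_midnight(datetime.now(timezone.utc))
    print(f"Approximate time until the quota refreshes: {remaining}")
```

If the actual refresh time differs from midnight Eastern, only the construction of `next_midnight` would need to change.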

Steps to use

- Open the Autograder.io page.

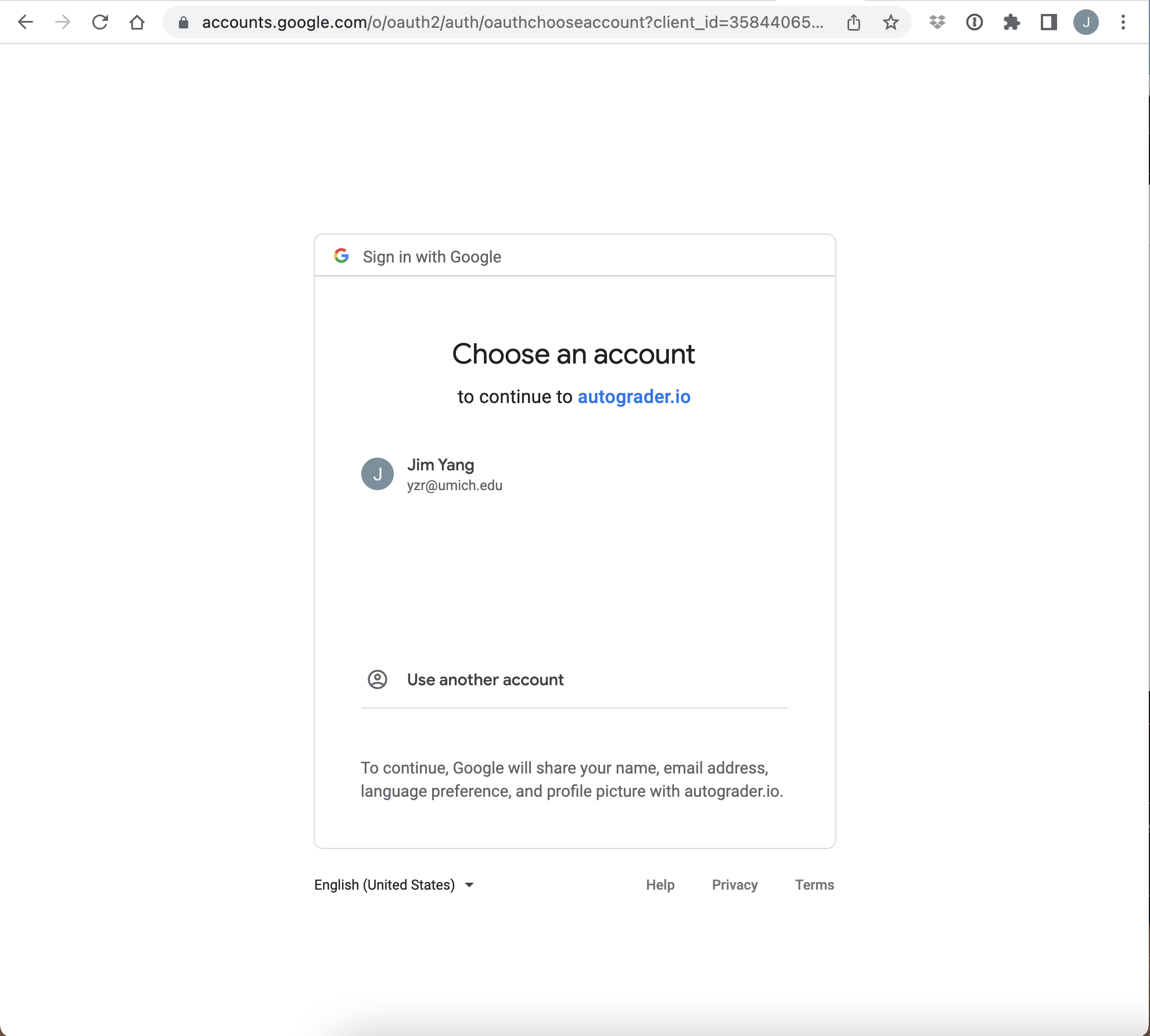

- Log in with your UMich G Suite account.

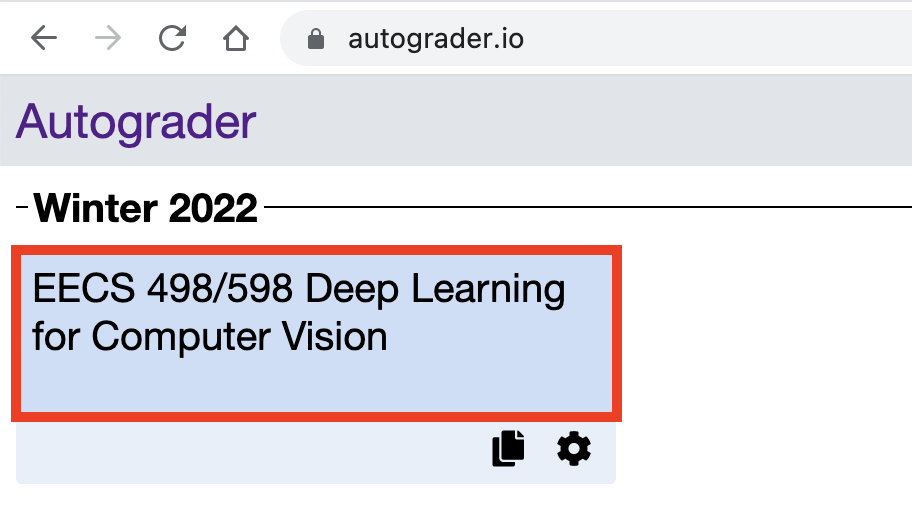

- Select the EECS498/598 Deep Learning for Computer Vision course.

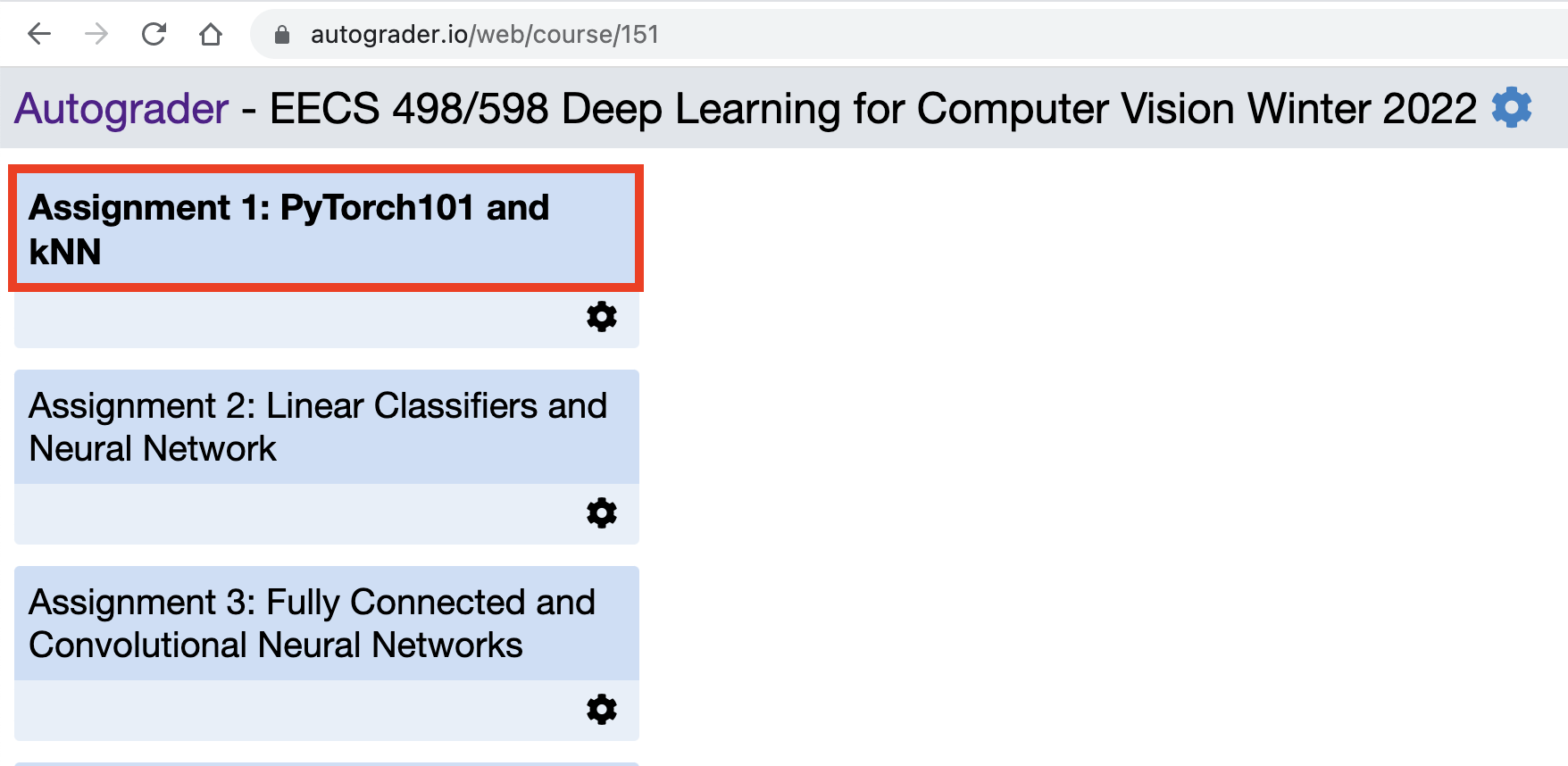

- Choose the assignment that you want to grade.

- Drag and drop, or select, the files that you want to grade.

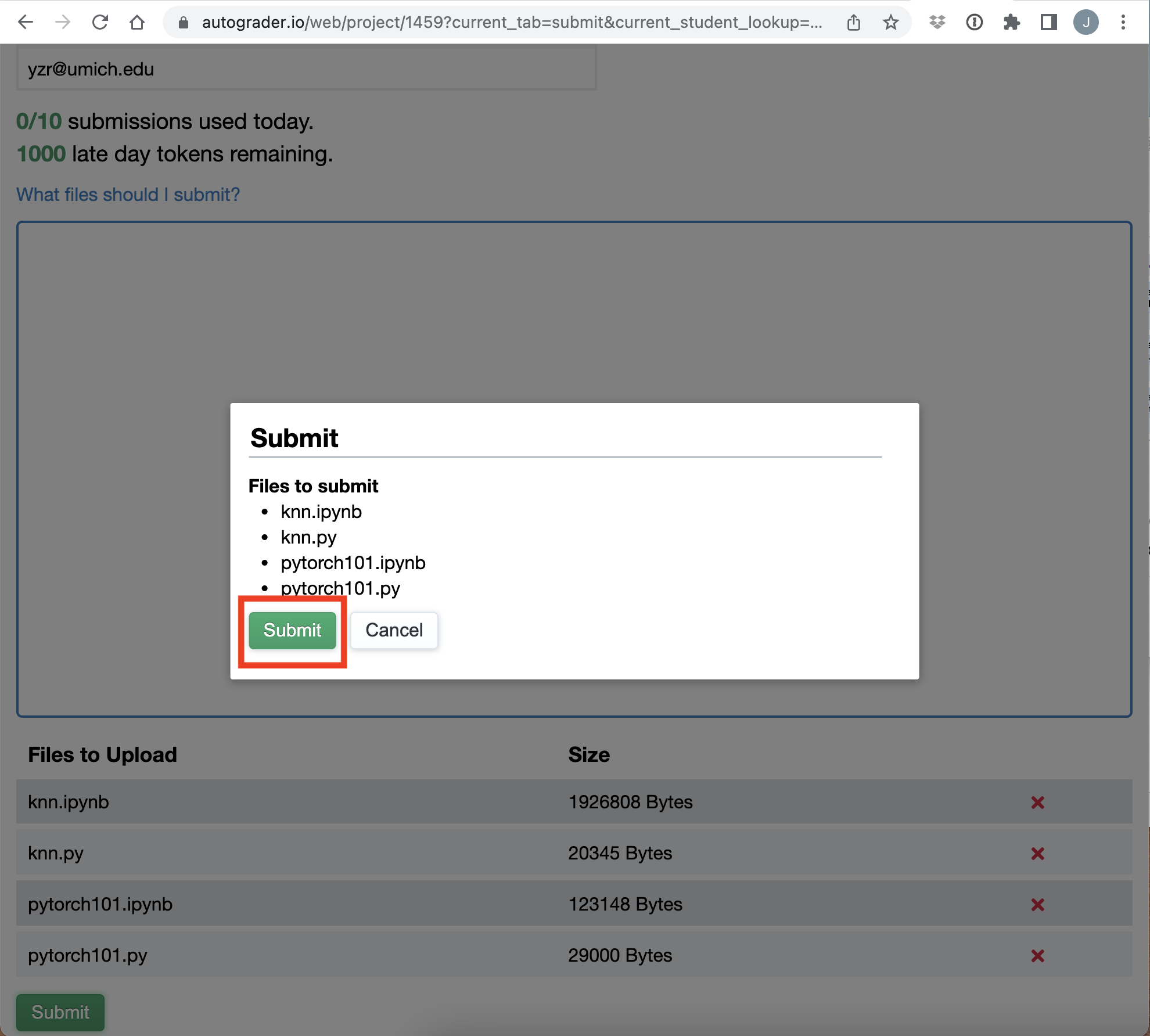

- Check the files that you’ve selected and press the submit button.

- Select ‘submit’ once more to confirm and start grading.

- You will get an automated email once your submission is received.

- FAQ

-

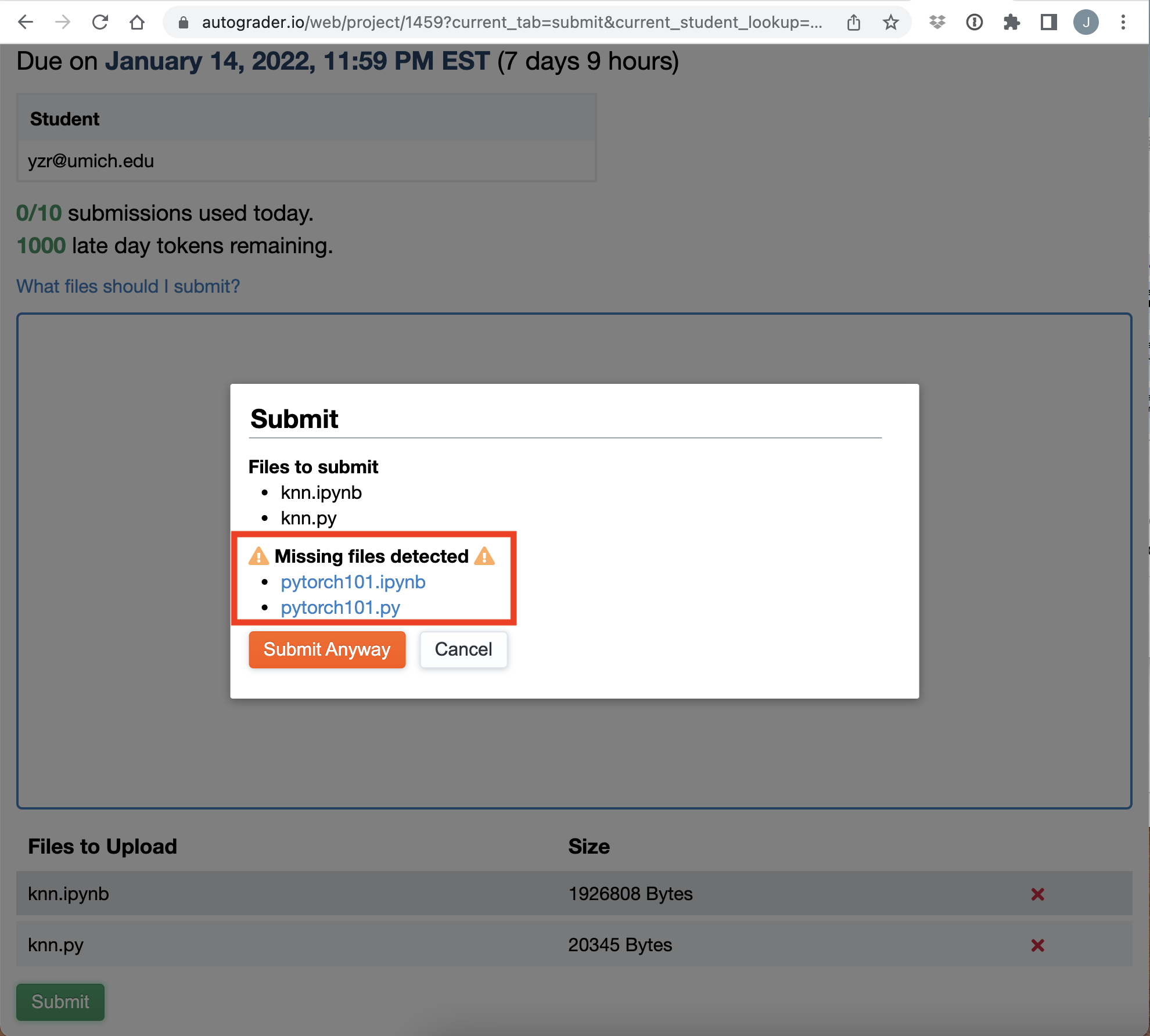

Q: How I can catch which files I need to submit? or… What happen if I forgot to add some/all of the files?

A: Autograder will let you know that you’ve missed some files to add.


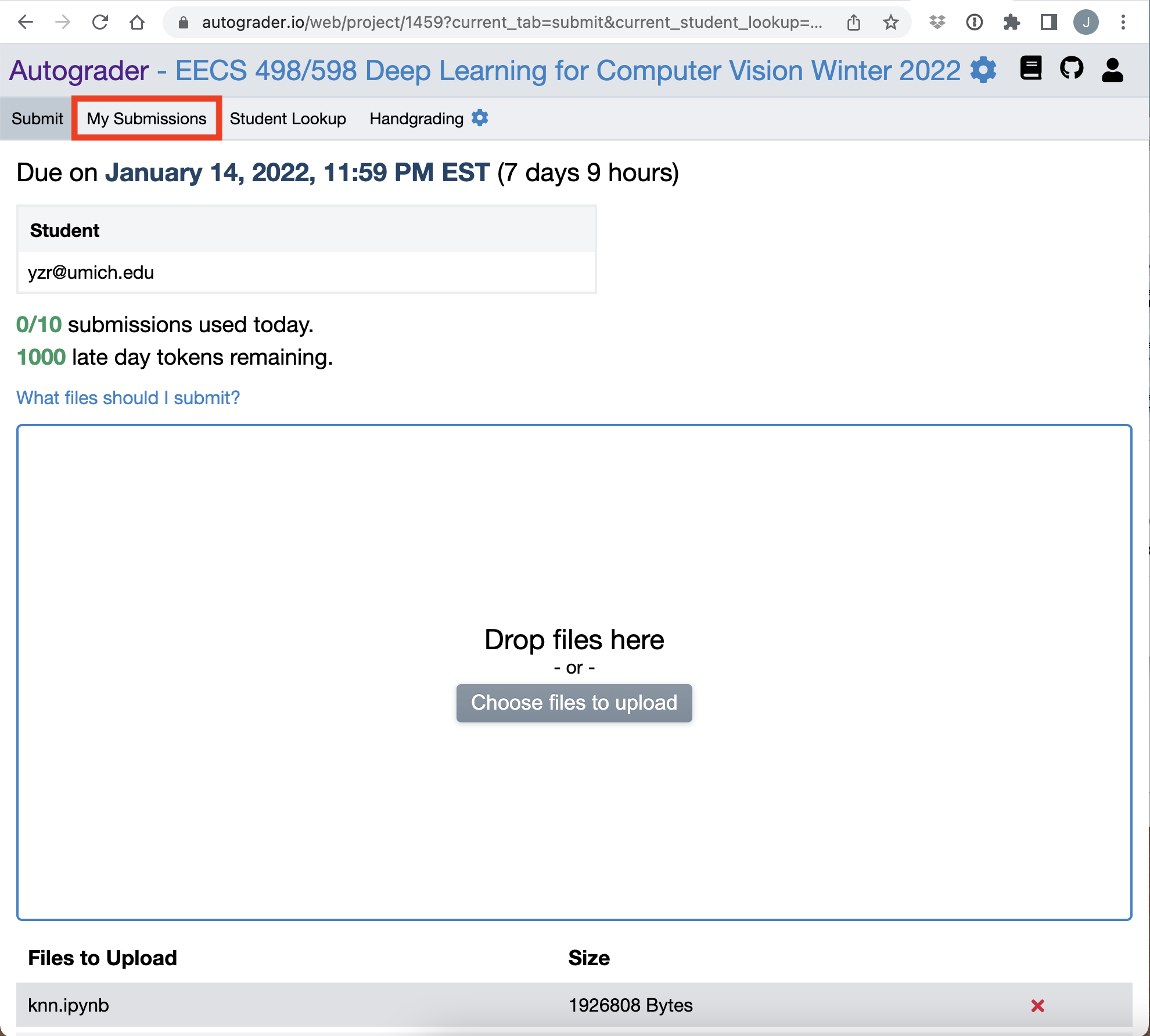

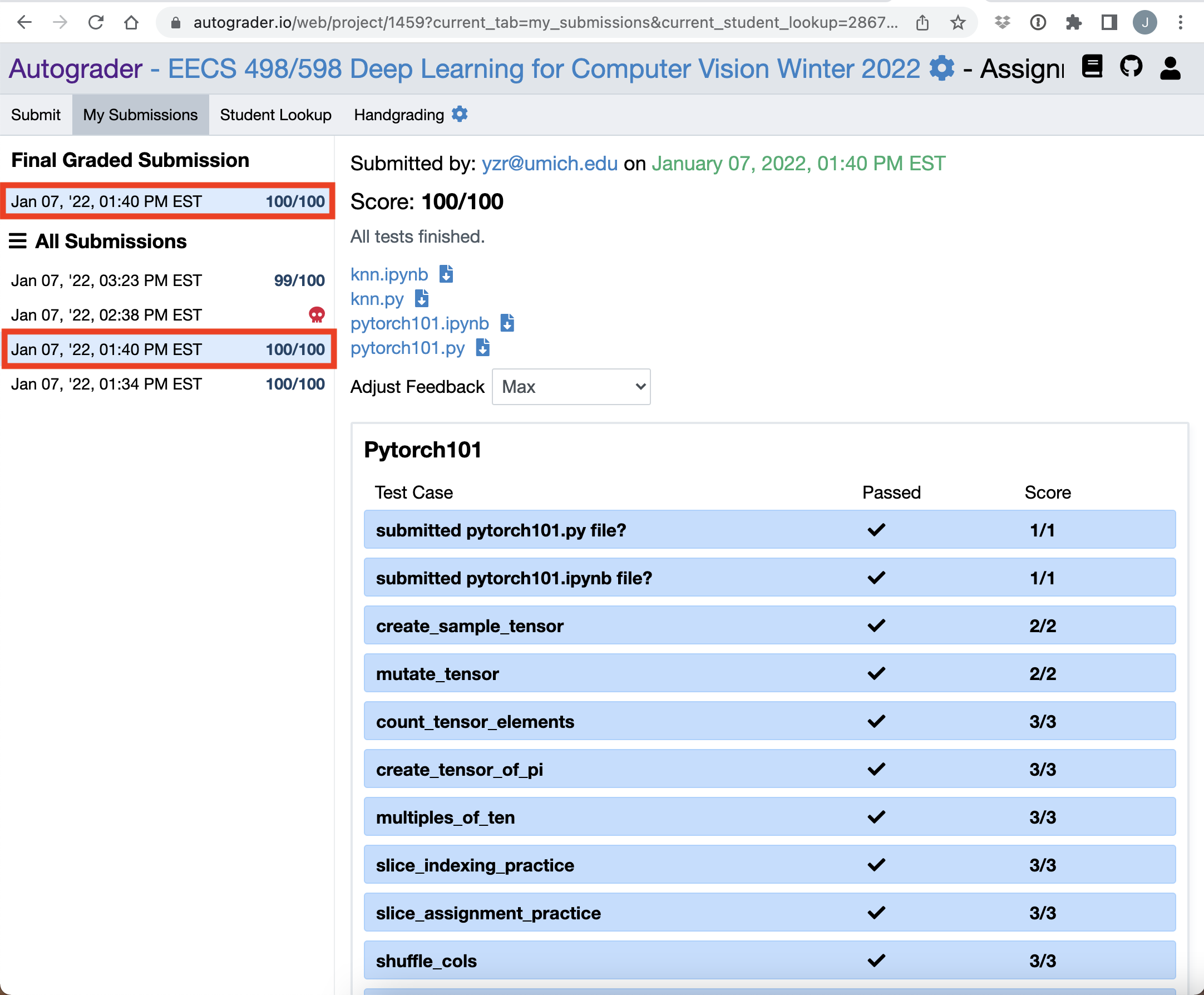
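The Autograder reports missing files after you upload, but the same kind of check can be run locally before you spend a submission. The sketch below is a minimal, hypothetical helper. The file names in the usage example are placeholders, not the required files of any actual assignment, so substitute the names your assignment asks for.

```python
from pathlib import Path


def find_missing_files(directory, required_names):
    """Return the required file names that do not exist in `directory`."""
    folder = Path(directory)
    return [name for name in required_names if not (folder / name).is_file()]


if __name__ == "__main__":
    # Placeholder names for illustration only; replace with your assignment's files.
    required = ["example_assignment.py", "example_assignment.ipynb"]
    missing = find_missing_files(".", required)
    if missing:
        print("Missing files:", ", ".join(missing))
    else:
        print("All required files are present.")
```

This only checks that the files exist. It does not check their contents, so the Autograder's own message remains the authoritative one.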

How to check your result

After you have submitted your .py and .ipynb files, you can check your grade on the web. On the assignment page (step 4 of the previous tab):

- Select My Submissions.

- On the top left, you can see the score of your latest submission. For your final score, the Autograder selects the highest score among all of your submissions.

-

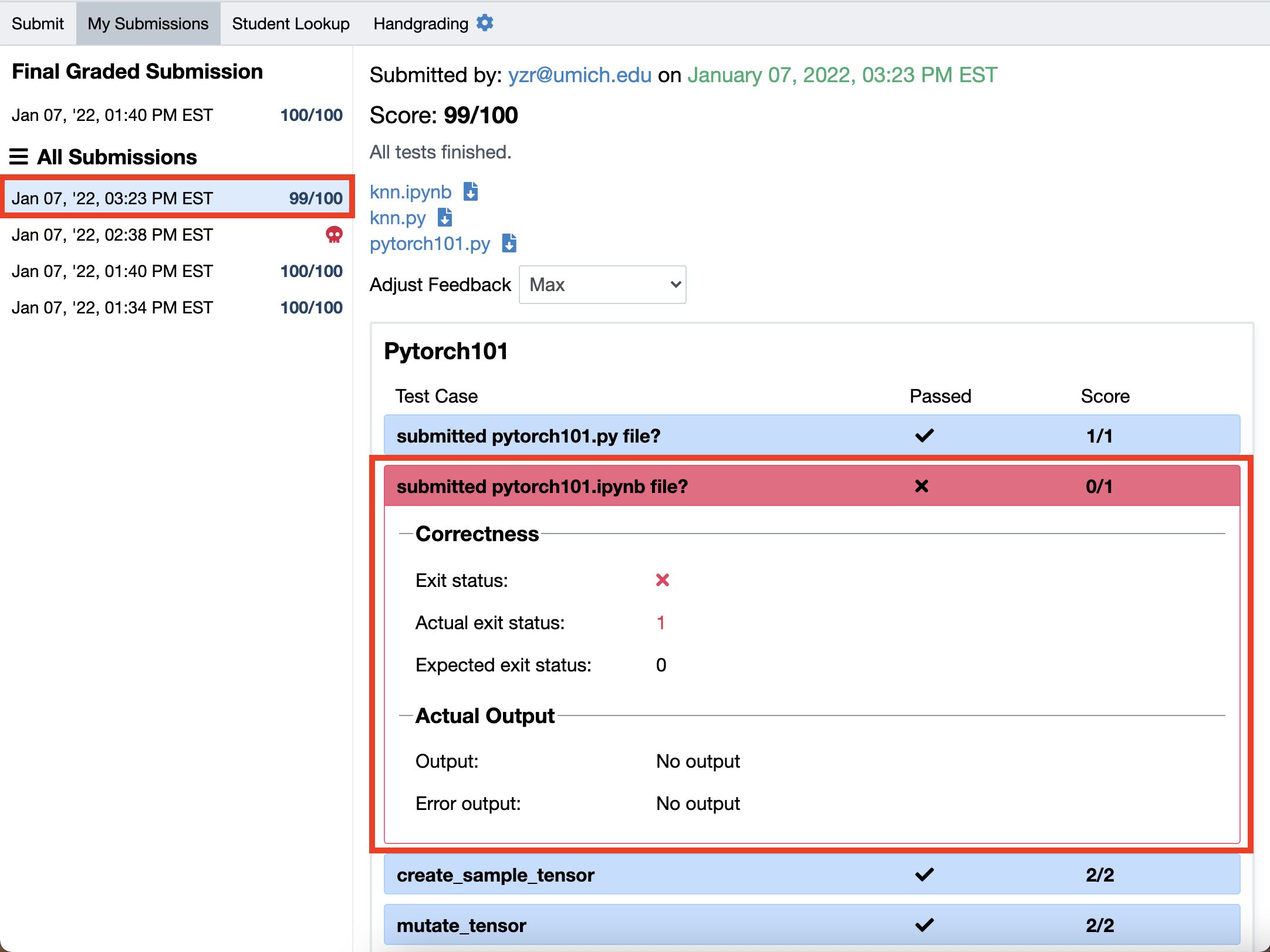

If you want to check why/where you lose some points, then you can trace the reason for each test case sample with the error message.
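The difference between the latest result and the final score can be illustrated with a few lines of Python. This is only a sketch of the "highest score among all submissions" rule described above. The score values are made up, the function name is hypothetical, and the code does not reflect how the Autograder is implemented internally.

```python
def final_score(submission_scores):
    """Return the highest score among all submissions, or None if there are none."""
    return max(submission_scores, default=None)


# Made-up scores for three submissions, in submission order.
scores = [72.5, 90.0, 85.0]
latest = scores[-1]          # score of the most recent submission
final = final_score(scores)  # highest score across all submissions
print(f"Latest: {latest}, final: {final}")
```

In this example the latest submission scored lower than an earlier one, so the earlier, higher score is the one that counts.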

- FAQs:

-

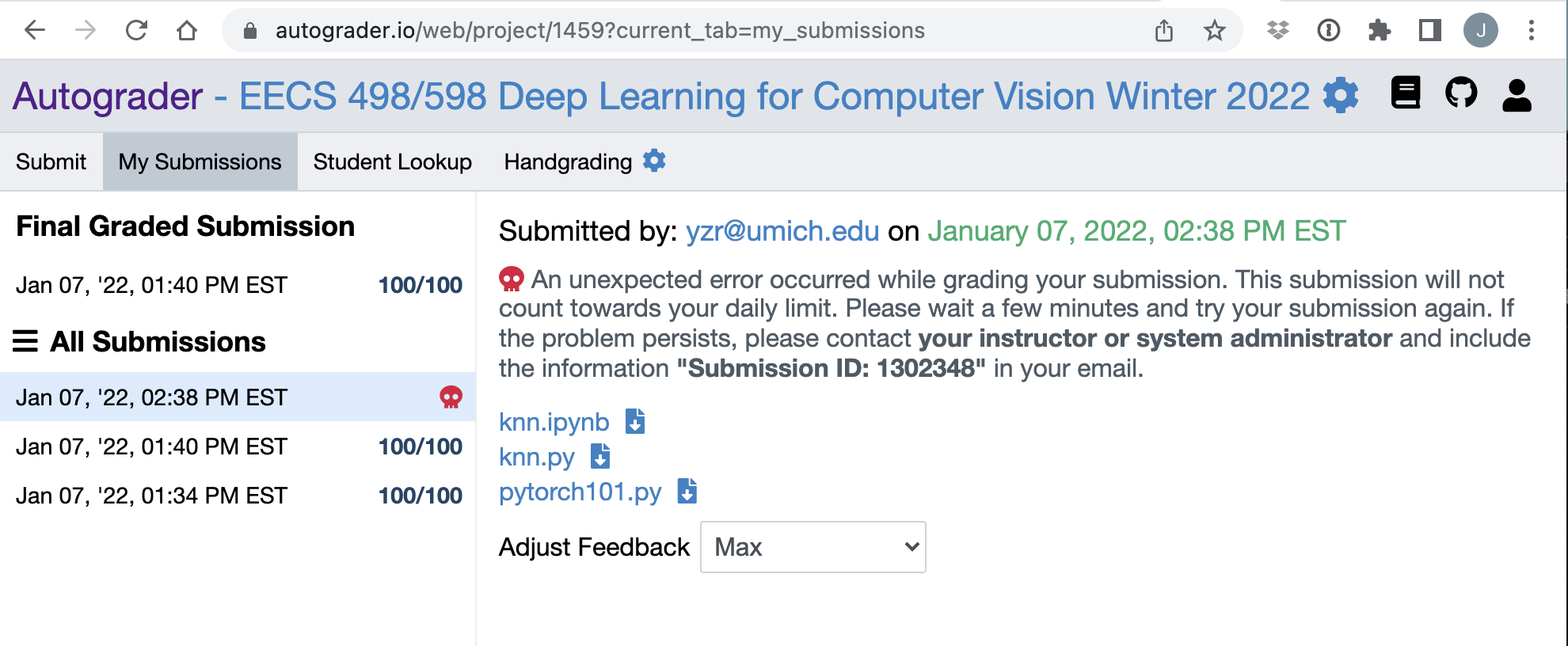

Q: What happens if the server is not working?

A: You will see an error note like this, and it would not count as a valid submission. You can resubmit once again. If you face a similar error multiple times, then please post this issue in Piazza.

- Q: What should I do if I think the evaluation is wrong?

A: Please post the issue on Piazza. If we find that our evaluation script has an error, we will re-grade your submission on our end (without consuming your quota), and your score will be updated once the re-grading is done.
